MASV and LucidLink integration via AWS: Architecture and implementation guide

Introduction and purpose

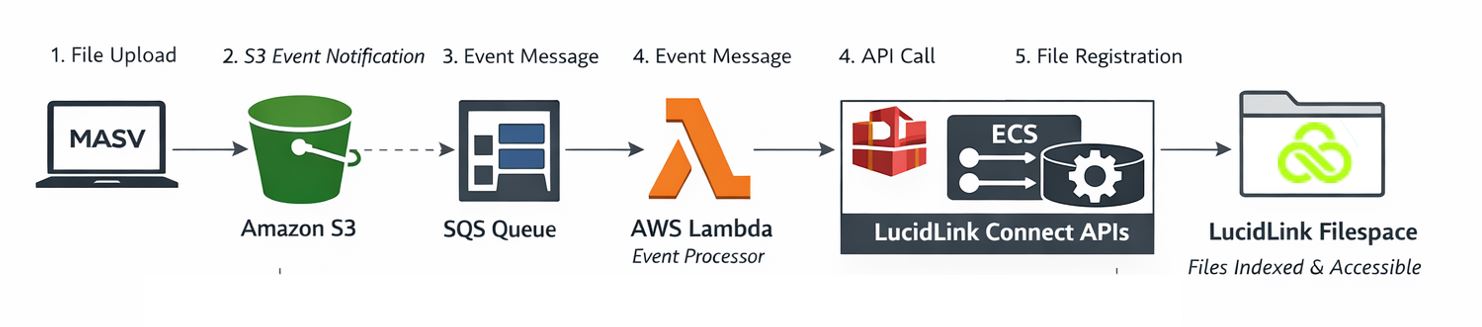

This document describes a cloud-native architecture that enables files uploaded through a MASV Portal to be automatically ingested and made accessible within a LucidLink Filespace. Combining MASV Portals with LucidLink Connect enables seamless, high-speed ingestion of large files directly into cloud-backed, instantly accessible LucidLink workspaces.

The solution leverages Amazon S3 as the underlying storage layer, with AWS event-driven services orchestrating the integration between MASV and LucidLink Connect APIs.

The primary objective of this architecture is to provide an automated pipeline in which externally uploaded files become available within LucidLink shortly after upload, without manual intervention. MASV is responsible for high-performance file transfer and user interaction, AWS provides the event processing and integration logic, and LucidLink provides indexed, filesystem-style access to the data stored in S3.

This approach aligns with modern cloud design and composable architecture principles by cleanly separating ingestion, storage, and indexing across purpose-built components.

High-level architecture and flow

At a high level, the solution operates as an event-driven pipeline triggered by file uploads. When a user uploads content through a MASV Portal configured with an S3 integration, the files are written directly into a designated S3 bucket. This bucket serves as the authoritative storage location for all uploaded objects.

Amazon S3 is configured to emit ObjectCreated events whenever new files are written. These events are not sent directly to compute services; instead, they are delivered to an Amazon SQS queue. This design introduces a durable buffering layer that decouples file ingestion from downstream processing, improving reliability and scalability.

An AWS Lambda function is configured to consume messages from the SQS queue. For each event, the Lambda function extracts the relevant object metadata, including bucket name and object key, and constructs a request to the LucidLink Connect API. This API call registers the new file within a LucidLink Filespace, making it immediately visible to users accessing LucidLink.

The LucidLink Connect API runs as a containerized service on Amazon Elastic Container Service (Amazon ECS). Communication between Lambda and this API occurs entirely within a Virtual Private Cloud (VPC), using AWS Cloud Map for service discovery, so no public endpoints are exposed.

Amazon S3 configuration

Amazon S3 serves as the central storage layer in this architecture and is the destination for all MASV uploads. The MASV Portal is configured with an S3 integration such that uploaded files are written directly into a specified bucket without intermediary processing.

To enable downstream automation, the S3 bucket is configured with event notifications for all object creation events. These notifications are directed to an Amazon Simple Queue Service (Amazon SQS) queue rather than directly invoking compute services. This indirection is critical for ensuring reliable event delivery and supporting retry mechanisms.
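As a minimal sketch of the notification setup described above, the following Python builds the configuration document that routes object creation events to a queue. The queue ARN and the optional `uploads/` prefix filter are illustrative assumptions, not values from this architecture.

```python
def build_notification_config(queue_arn: str, prefix: str = "") -> dict:
    """Build an S3 notification configuration that sends ObjectCreated events to SQS."""
    queue_config = {
        "QueueArn": queue_arn,
        # Matches every object creation variant (Put, Post, Copy, multipart complete).
        "Events": ["s3:ObjectCreated:*"],
    }
    if prefix:
        # Optional (assumption): only notify for keys under a given prefix.
        queue_config["Filter"] = {
            "Key": {"FilterRules": [{"Name": "prefix", "Value": prefix}]}
        }
    return {"QueueConfigurations": [queue_config]}


# Hypothetical queue ARN, for illustration only.
config = build_notification_config(
    "arn:aws:sqs:us-east-1:123456789012:masv-ingest-queue", prefix="uploads/"
)
```

With boto3, a dictionary of this shape can be passed as the `NotificationConfiguration` argument of the S3 client's `put_bucket_notification_configuration` call.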

For S3 to publish messages to SQS, an explicit access policy must be applied to the queue. This policy grants the S3 service principal permission to send messages, scoped to the specific bucket Amazon Resource Name (ARN) and AWS account. Without this policy, S3 cannot deliver events to the queue, and the failure is easy to miss because the uploads themselves still succeed.
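The queue policy might look like the following sketch, which scopes `sqs:SendMessage` to one bucket and one account. The ARNs and account ID are placeholders chosen for illustration.

```python
import json


def build_queue_policy(queue_arn: str, bucket_arn: str, account_id: str) -> str:
    """Return a JSON queue policy that lets S3 send messages from one bucket only."""
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "AllowS3SendMessage",
                "Effect": "Allow",
                "Principal": {"Service": "s3.amazonaws.com"},
                "Action": "sqs:SendMessage",
                "Resource": queue_arn,
                "Condition": {
                    # Restrict delivery to the specific source bucket and account.
                    "ArnLike": {"aws:SourceArn": bucket_arn},
                    "StringEquals": {"aws:SourceAccount": account_id},
                },
            }
        ],
    }
    return json.dumps(policy)


# Placeholder identifiers, for illustration only.
queue_policy = build_queue_policy(
    "arn:aws:sqs:us-east-1:123456789012:masv-ingest-queue",
    "arn:aws:s3:::masv-ingest-bucket",
    "123456789012",
)
```

The resulting string is what the SQS `Policy` queue attribute expects, for example through the boto3 SQS client's `set_queue_attributes` call.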

Amazon SQS as the event buffer

Amazon SQS provides a durable, decoupled messaging layer between S3 and Lambda. When S3 emits an ObjectCreated event, the message is placed onto the main queue, where it awaits processing.

The use of SQS ensures that transient failures in downstream services do not result in lost events: messages remain in the queue until they are successfully processed, and failed messages are retried automatically. A dead-letter queue (DLQ) is configured alongside the main queue to capture messages that still fail after repeated attempts, which provides operational visibility and supports troubleshooting.
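The link between the main queue and its DLQ is expressed as a redrive policy on the main queue. The sketch below builds that attribute; the DLQ ARN and the retry limit of 5 are assumptions, since this guide does not state a specific value.

```python
import json


def build_redrive_attributes(dlq_arn: str, max_receive_count: int = 5) -> dict:
    """Build the SQS queue attribute that moves repeatedly failing messages to a DLQ."""
    if max_receive_count < 1:
        raise ValueError("max_receive_count must be at least 1")
    redrive_policy = {
        "deadLetterTargetArn": dlq_arn,
        # Number of receives before SQS moves the message to the DLQ (assumed value).
        "maxReceiveCount": max_receive_count,
    }
    # SQS expects the redrive policy as a JSON-encoded string attribute.
    return {"RedrivePolicy": json.dumps(redrive_policy)}


# Hypothetical DLQ ARN, for illustration only.
attributes = build_redrive_attributes(
    "arn:aws:sqs:us-east-1:123456789012:masv-ingest-dlq"
)
```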

The queue also lets Lambda consume events at a controlled rate, so burst uploads from MASV accumulate in the queue rather than overwhelming downstream services.

AWS Lambda processing and logic

The AWS Lambda function acts as the orchestration layer that bridges AWS events with the LucidLink API. It is triggered by messages arriving in the SQS queue, typically processing one message per invocation to maintain simplicity and traceability.
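One way to express "one message per invocation" is through the batch size of the SQS event source mapping. The following sketch builds the mapping parameters; the function name, queue ARN, and the optional concurrency cap are assumptions for illustration.

```python
from typing import Optional


def build_event_source_mapping(
    queue_arn: str, function_name: str, max_concurrency: Optional[int] = None
) -> dict:
    """Build parameters for an SQS-to-Lambda event source mapping."""
    mapping = {
        "EventSourceArn": queue_arn,
        "FunctionName": function_name,
        # One SQS message per invocation keeps each log trace tied to one file.
        "BatchSize": 1,
        "Enabled": True,
    }
    if max_concurrency is not None:
        # Optional cap on concurrent invocations (assumption: not stated in this guide).
        # Lambda documents a minimum of 2 for this setting.
        if max_concurrency < 2:
            raise ValueError("max_concurrency must be 2 or greater")
        mapping["ScalingConfig"] = {"MaximumConcurrency": max_concurrency}
    return mapping


# Hypothetical names, for illustration only.
mapping = build_event_source_mapping(
    "arn:aws:sqs:us-east-1:123456789012:masv-ingest-queue",
    "masv-lucidlink-ingest",
    max_concurrency=5,
)
```

These keys correspond to arguments of the boto3 Lambda client's `create_event_source_mapping` call. Capping concurrency is one way to protect the API container during large bursts.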

Upon execution, the function parses the SQS payload to extract the underlying S3 event. It identifies the bucket and object key associated with the newly uploaded file and uses this information to construct a request to the LucidLink Connect API.
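The parsing step can be sketched as follows. Each SQS record body carries a JSON-encoded S3 event, and S3 URL-encodes object keys in that event, so the key is decoded before use. The function name and sample values are illustrative.

```python
import json
from typing import List, Tuple
from urllib.parse import unquote_plus


def extract_s3_objects(sqs_event: dict) -> List[Tuple[str, str]]:
    """Return (bucket, key) pairs for every ObjectCreated record in an SQS event."""
    objects = []
    for record in sqs_event.get("Records", []):
        body = json.loads(record["body"])
        # S3 test messages (sent when notifications are configured) carry no
        # "Records" list, so they are skipped naturally here.
        for s3_record in body.get("Records", []):
            if not s3_record.get("eventName", "").startswith("ObjectCreated"):
                continue
            bucket = s3_record["s3"]["bucket"]["name"]
            # Keys arrive URL-encoded, with spaces represented as "+".
            key = unquote_plus(s3_record["s3"]["object"]["key"])
            objects.append((bucket, key))
    return objects


# Illustrative payload: one real upload event and one S3 test message.
sample_event = {
    "Records": [
        {"body": json.dumps({"Records": [{
            "eventName": "ObjectCreated:Put",
            "s3": {"bucket": {"name": "masv-ingest-bucket"},
                   "object": {"key": "uploads/My+File%281%29.mov"}},
        }]})},
        {"body": json.dumps({"Service": "Amazon S3", "Event": "s3:TestEvent"})},
    ]
}
uploaded = extract_s3_objects(sample_event)
```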

The Lambda function is configured with several critical environment variables that define its behavior. These include the base URL of the LucidLink API, identifiers for the target datastore and Filespace, and parameters controlling how file paths are mapped. For example, path preservation ensures that the folder structure in S3 is reflected accurately within LucidLink, while directory creation flags ensure that missing paths are created automatically.
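The configuration described above could be loaded as in the sketch below. Every environment variable name here (`LUCIDLINK_API_URL`, `LUCIDLINK_DATASTORE_ID`, `LUCIDLINK_FILESPACE_ID`, `DESTINATION_ROOT`, `PRESERVE_PATH`, `CREATE_DIRECTORIES`) is hypothetical, as are the defaults; the actual names depend on the deployed function.

```python
import posixpath
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    api_base_url: str
    datastore_id: str
    filespace_id: str
    destination_root: str
    preserve_path: bool
    create_directories: bool


def _flag(value: str) -> bool:
    """Interpret common truthy strings used in environment variables."""
    return value.strip().lower() in {"1", "true", "yes"}


def load_settings(env: Mapping[str, str]) -> Settings:
    """Read settings from an environment mapping (variable names are hypothetical)."""
    return Settings(
        api_base_url=env["LUCIDLINK_API_URL"].rstrip("/"),
        datastore_id=env["LUCIDLINK_DATASTORE_ID"],
        filespace_id=env["LUCIDLINK_FILESPACE_ID"],
        destination_root=env.get("DESTINATION_ROOT", "/"),
        preserve_path=_flag(env.get("PRESERVE_PATH", "true")),
        create_directories=_flag(env.get("CREATE_DIRECTORIES", "true")),
    )


def map_key_to_path(key: str, settings: Settings) -> str:
    """Map an S3 object key to a destination path inside the Filespace."""
    # With path preservation the S3 folder structure is kept; otherwise only
    # the file name is placed under the destination root.
    relative = key if settings.preserve_path else posixpath.basename(key)
    return posixpath.join(settings.destination_root, relative.lstrip("/"))


# Illustrative values only.
settings = load_settings({
    "LUCIDLINK_API_URL": "http://lucidlink-api.masv-aws:3003/",
    "LUCIDLINK_DATASTORE_ID": "example-datastore",
    "LUCIDLINK_FILESPACE_ID": "example-filespace",
    "DESTINATION_ROOT": "/masv",
})
destination = map_key_to_path("uploads/projectA/clip.mov", settings)
```

In a Lambda function, `load_settings(os.environ)` would supply the mapping. The `create_directories` flag is only loaded here; how it is forwarded depends on the LucidLink Connect API request format, which this sketch does not assume.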

From a networking perspective, the Lambda function is deployed within the same VPC as the ECS service hosting the LucidLink API. This allows it to resolve and communicate with the API endpoint using an internal DNS name provided by AWS Cloud Map. The Lambda execution role must also include permissions for SQS consumption, CloudWatch logging, and VPC networking operations such as elastic network interface management.
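The three permission groups named above can be sketched as an IAM policy document. The queue ARN is a placeholder, and the wildcard resources on the logging and network interface statements are a simplification that a production policy might narrow.

```python
def build_execution_policy(queue_arn: str) -> dict:
    """Build an IAM policy covering SQS consumption, logging, and VPC networking."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ConsumeQueue",
                "Effect": "Allow",
                "Action": [
                    "sqs:ReceiveMessage",
                    "sqs:DeleteMessage",
                    "sqs:GetQueueAttributes",
                ],
                "Resource": queue_arn,
            },
            {
                "Sid": "WriteLogs",
                "Effect": "Allow",
                "Action": [
                    "logs:CreateLogGroup",
                    "logs:CreateLogStream",
                    "logs:PutLogEvents",
                ],
                "Resource": "*",
            },
            {
                # Needed so Lambda can attach network interfaces inside the VPC.
                "Sid": "ManageNetworkInterfaces",
                "Effect": "Allow",
                "Action": [
                    "ec2:CreateNetworkInterface",
                    "ec2:DescribeNetworkInterfaces",
                    "ec2:DeleteNetworkInterface",
                ],
                "Resource": "*",
            },
        ],
    }


# Placeholder queue ARN, for illustration only.
execution_policy = build_execution_policy(
    "arn:aws:sqs:us-east-1:123456789012:masv-ingest-queue"
)
```

The AWS managed policies `AWSLambdaSQSQueueExecutionRole` and `AWSLambdaVPCAccessExecutionRole` cover similar ground and may be a simpler starting point.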

ECS and LucidLink API container

The LucidLink Connect API is deployed as a containerized service within Amazon ECS using AWS Fargate. This service is responsible for receiving API calls from the Lambda function and registering files within a LucidLink Filespace.

The ECS service runs a task based on a task definition that specifies the container image, resource allocation, and networking configuration. The container exposes an HTTP endpoint on port 3003, which is used by the Lambda function to submit file registration requests.
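A task definition along these lines might be sketched as follows. Only port 3003 and the use of Fargate come from this guide; the family name, container name, image URI, and CPU and memory sizes are assumptions.

```python
def build_task_definition(image: str, cpu: str = "256", memory: str = "512") -> dict:
    """Build parameters for a Fargate task definition exposing the API on port 3003."""
    return {
        "family": "lucidlink-api",  # hypothetical family name
        # Fargate tasks require the awsvpc network mode.
        "networkMode": "awsvpc",
        "requiresCompatibilities": ["FARGATE"],
        "cpu": cpu,        # assumed size, in CPU units
        "memory": memory,  # assumed size, in MiB
        "containerDefinitions": [
            {
                "name": "lucidlink-api",  # hypothetical container name
                "image": image,
                "essential": True,
                "portMappings": [{"containerPort": 3003, "protocol": "tcp"}],
            }
        ],
    }


# Placeholder image URI, for illustration only.
task_definition = build_task_definition(
    "123456789012.dkr.ecr.us-east-1.amazonaws.com/lucidlink-api:latest"
)
```

These keys match arguments of the boto3 ECS client's `register_task_definition` call. A real definition would usually also set an execution role so the task can pull its image and write logs.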

To enable internal service discovery, the ECS service is integrated with AWS Cloud Map. This allows the service to be addressed via a stable DNS name within the VPC, eliminating the need to manage dynamic IP addresses. The configuration defines a namespace and service name, resulting in an endpoint such as lucidlink-api.masv-aws. The Lambda function reads this endpoint from its environment variables.
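To illustrate how the Cloud Map names combine into the endpoint, the sketch below composes a base URL from the service name, the namespace, and the container port. The helper name is hypothetical, and the plain `http` scheme is an assumption based on the HTTP endpoint described for the container.

```python
def cloud_map_base_url(
    service: str, namespace: str, port: int = 3003, scheme: str = "http"
) -> str:
    """Compose the internal base URL from Cloud Map service and namespace names."""
    if not service or not namespace:
        raise ValueError("service and namespace must be non-empty")
    # Cloud Map private DNS names take the form <service>.<namespace>.
    return f"{scheme}://{service}.{namespace}:{port}"


base_url = cloud_map_base_url("lucidlink-api", "masv-aws")
```

This name resolves only from inside the VPC associated with the private namespace, which is why the Lambda function must be deployed in that VPC.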

Security groups applied to the ECS service restrict inbound traffic to internal VPC sources on the required port, while outbound traffic is permitted for HTTPS communication. This ensures that the API is not publicly accessible while still allowing it to interact with external services if required.

Networking and security model

A key design principle of this architecture is that all service-to-service communication occurs within a private VPC. The LucidLink API is not exposed to the public internet; instead, it is accessed internally by the Lambda function using private DNS resolution.

The Lambda function is configured with subnet and security group settings that allow it to communicate freely within the VPC. Its security group typically permits all outbound traffic, while the ECS service security group restricts inbound traffic to trusted internal sources and the specific API port.
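One way to restrict inbound traffic to "trusted internal sources" is to reference the Lambda function's security group as the only permitted source. The sketch below builds such a rule; using a security group reference rather than a CIDR range is an assumption, and the group ID is a placeholder.

```python
def build_api_ingress_rule(lambda_sg_id: str, port: int = 3003) -> list:
    """Build an ingress rule allowing only the Lambda security group on the API port."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            # Referencing the source security group avoids hard-coding IP ranges.
            "UserIdGroupPairs": [
                {"GroupId": lambda_sg_id, "Description": "Lambda to LucidLink API"}
            ],
        }
    ]


# Placeholder security group ID, for illustration only.
ingress_rule = build_api_ingress_rule("sg-0123456789abcdef0")
```

A list of this shape can be passed as `IpPermissions` to the boto3 EC2 client's `authorize_security_group_ingress` call, with `GroupId` set to the ECS service's security group.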

This approach minimizes the attack surface and aligns with enterprise security expectations by avoiding public endpoints and enforcing least-privilege access patterns.

End-to-end integration with MASV

MASV serves as the entry point for all file uploads in this architecture. Through its S3 integration capability, MASV writes uploaded content directly into the configured S3 bucket. This eliminates the need for intermediate storage or transfer services within AWS.

After the file is written, the rest of the pipeline is entirely automated. S3 generates the event, SQS buffers it, Lambda processes it, and the LucidLink API registers it. The result is that files uploaded via MASV appear in LucidLink with minimal latency, backed by S3 storage.

This integration allows organizations to combine MASV’s high-performance file transfer capabilities with LucidLink’s real-time file access model, using AWS as the orchestration backbone.

Assumptions, preconditions, and related information

This guide assumes that the following components are already in place. Each can be an existing resource or one created specifically for this purpose:

- An Amazon S3 bucket dedicated to hosting objects that are ingested from MASV Portal(s) and made available to LucidLink users.

- A MASV Portal with the appropriate Amazon S3 integration linking to the S3 bucket.

- An AWS VPC to contain the networked components.

For related information on securely ingesting files into a LucidLink Filespace, see "How to connect MASV with LucidLink."

Conclusion

This architecture provides a cloud-native solution that enables files uploaded to Amazon S3 via a MASV Portal to be automatically ingested and made accessible within a LucidLink Filespace. By leveraging S3 event notifications, SQS buffering, Lambda processing, and ECS-hosted APIs, the solution achieves near real-time file availability while maintaining strong decoupling between components.

The key value lies in the clear separation of concerns: MASV handles ingestion, S3 provides durable storage, AWS orchestrates the workflow, and LucidLink Connect APIs deliver user-facing access. This modular design enables flexibility, scalability, and alignment with enterprise cloud best practices.